Code at AI speed.

Test with production confidence.

Use mirrord to instantly validate every change against your live staging environment — multiple agents, same cluster, no conflicts.

Windsurf, Antigravity, others or in the CLI

needed

config needed


mirrord gives your AI agents instant, real-world feedback from your Kubernetes cluster

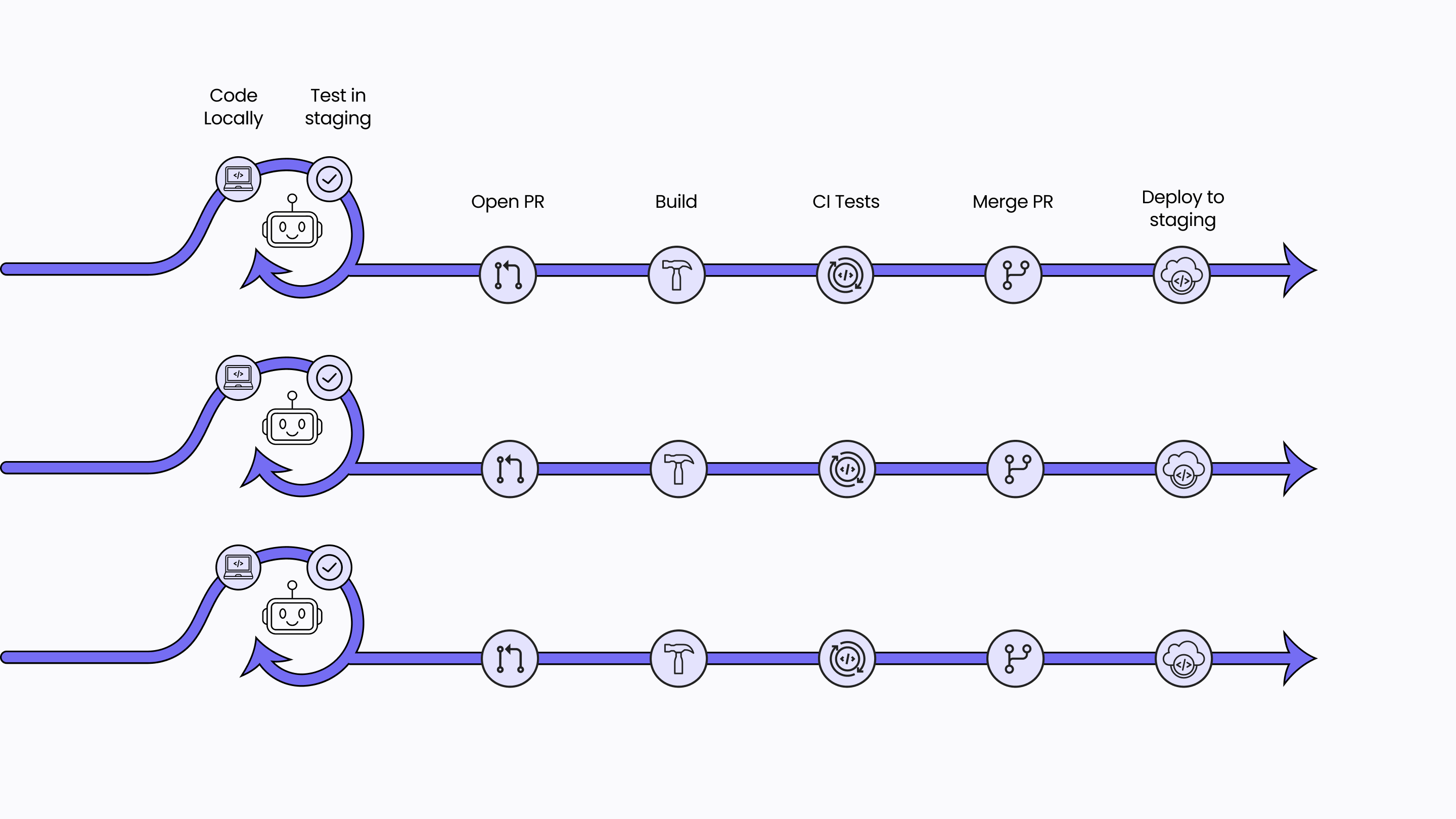

Before mirrord

AI generates code fast, but testing it means waiting for staging deployments

Local mocks fail to capture real-world conditions

Untested AI code often breaks the environment

Expensive, separate cloud environments for every dev

AI agents have no way to test their work quickly and autonomously against a live system

After mirrord

Test AI-generated code in cloud conditions within seconds

Live traffic, databases, and queues, with no mocks required

Safe isolation to prevent breaking shared environments

One shared, cost-efficient staging cluster for the whole team

Run any number of AI agents concurrently against the same environment

With mirrord, any number of AI agents can test code concurrently in the same environment.

Platform teams install the Operator once. Every developer and every AI agent can then connect to the real cluster through their own isolated layer.

AI has made it possible to generate entire features in minutes

The bottleneck isn't building anymore — it's testing that code in a realistic environment.

THE PROBLEM

Staging environments can't keep up, local mocks miss critical bugs, and developers spend 15–30 minutes each time they test AI-generated code.

THE SOLUTION

Cut testing time from 30 minutes to 30 seconds. mirrord closes that gap by letting you run local code with live cloud traffic and services, instantly.

↑ 50% faster feedback loops

Developers don't have to redeploy to test AI-generated code in the cloud.

↓ 80% lower cloud costs

By eliminating redundant cloud dev environments.

↓ 50% decrease in CI runs

Less downtime and lower cloud spend.

FAQ.

How does mirrord work with AI agents?

Can multiple AI agents and engineers test concurrently on the same cluster?

Can mirrord take an agent from code generator to something closer to an autonomous developer?

How much does it cost?

mirrord for Teams ($40/seat/month, paid annually) adds the Operator for concurrent use: queue splitting, database branching, traffic filtering, RBAC, and session management.

Enterprise (custom pricing) adds CI pipeline support, preview environments, airgapped clusters, high availability, and dedicated support.

No credit card required to start a free trial. See the pricing page for full details, or reach out to book a demo.

Tired of deploying to staging just to test AI-generated code?

Start testing in the cloud, right from your local machine.
